Most of us understand how fingerprinting works, where we compare a captured fingerprint, from a crime scene for example, to a live person’s fingerprint to determine if they match. We can also use a fingerprint to ensure that the true owner, and only the true owner, can unlock a smartphone or laptop. But could a fake fingerprint be used to fool the fingerprint sensor in the phone? The simplest answer is yes, unless we can determine whether the fingerprint actually came from a living and physically present person who is trying to unlock the phone.

In biometrics, there are two important measurements: Biometric Matching and Biometric Liveness. Biometric matching is the process of identifying or authenticating a person by comparing their physiological attributes to information that has already been collected. For example, when a captured fingerprint matches a fingerprint on file, that’s matching. Liveness Detection is a computerized process to determine whether the computer is interfacing with a live human and not an impostor such as a photo, a deep-fake video, or a replica. For example, one measure of Liveness is whether the presentation occurred in real time. Without Liveness, biometric matching would be increasingly vulnerable to fraud attacks that are continuously growing in their ability to fool biometric matching systems with imitation and fake biometric attributes. Without proper liveness detection, “Presentation Attack”, “spoof”, or “bypass” attempts endanger the user. It is important to have strong Presentation Attack Detection (PAD) as well as the ability to detect injection attacks (where imagery bypasses the camera), as both are ways to spoof the user’s biometrics. Liveness determines if it’s a real person, while matching determines if it’s the correct, real person.

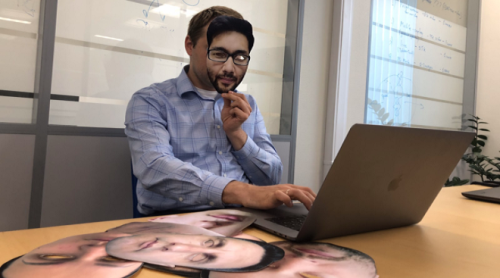

Increasingly powerful computer systems have brought increasingly sophisticated hacking strategies, such as Presentation and Bypass attacks. There are many varieties of Presentation attacks, including high-resolution paper and digital photos, high-definition challenge/response videos, and paper masks. Others use commercially available lifelike dolls; human-worn resin, latex, and silicone 3D masks; and custom-made ultra-realistic 3D masks and wax heads. These methods might seem right out of a bank heist movie, but they are used successfully in the real world.

There are other ways to defeat a biometric system, called Bypass attacks. These include intercepting, editing, and replacing legitimate biometric data with synthetic data that was not collected during the person’s biometric verification check. Other Bypass attacks might include intercepting and replacing legitimate camera feed data with previously captured video frames or with what’s known as a “deep-fake puppet”, a realistic-looking computer animation of the user. This video is a simple but good example of biometric vulnerabilities, lacking any regard for Liveness.

The COVID-19 pandemic provides significant examples of Presentation and Bypass attacks and the resulting fraud. Pandemic stay-at-home orders, along with economic hardships, have increased citizen dependence on the electronic distribution of government pandemic stimulus and unemployment assistance funds, creating easy targets for fraudsters. Cybercriminals frequently use Presentation and Bypass attacks to defeat the enrollee and user authentication systems of government websites and steal from governments across the globe, with losses of taxpayer money amounting to hundreds of billions.

Properly designed biometric liveness and matching could have mitigated much of the trouble Nevadans are experiencing. There are various forms of biometric liveness testing:

- Active Liveness commands the user to successfully perform a movement or action like blinking, smiling, tilting the head, and track-following a bouncing image on the device screen. Importantly, instructions must be randomized and the camera/system must observe the user perform the required action.

- Passive Liveness relies on involuntary user cues like pupil dilation, reducing user friction and session abandonment. Passive liveness can be undisclosed, randomizing attack vector approaches. Alone, it can determine if captured image data is first-generation and not a replica presentation attack. Significantly higher Liveness and biometric match confidence can be gained if device camera data is captured securely with a verified camera feed, and the image data is verified to be captured in real-time by a device Software Development Kit (SDK). Under these circumstances both Liveness and Match confidence can be determined concurrently from the same data, mitigating vulnerabilities.

- Multimodal Liveness utilizes numerous Liveness modalities, like 2-dimensional face matching in combination with instructions to blink on command, to establish user choice and increase the number of devices supported. This often requires the user to “jump through hoops” of numerous Active Liveness tests and increases friction.

- Liveness and 3-dimensionality. A human must be 3D to be alive, while a mask-style artifact may be 3D without being alive. Thus, while 3D face depth measurements alone do not prove the subject is a live human, verifying 2-dimensionality proves the subject is not alive. Regardless of camera resolution or specialist hardware, 3-dimensionality provides substantially more usable and consistent data than 2D, which dramatically increases accuracy and highlights the importance of 3D depth detection as a component of stronger Liveness Detection.
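The randomization requirement for Active Liveness can be illustrated with a minimal Python sketch. The action names and the functions `issue_challenge` and `verify_challenge` are hypothetical, chosen only for illustration; a real system would obtain the observed actions from camera analysis, which is assumed here and not shown.

```python
import secrets

# Hypothetical action names, based on the examples in the list above.
ACTIONS = ["blink", "smile", "tilt_head", "follow_dot"]


def issue_challenge(length=3):
    """Return an unpredictable sequence of actions for one session.

    secrets.choice draws from a cryptographically strong source, so an
    attacker cannot pre-record a video that anticipates the sequence.
    """
    return [secrets.choice(ACTIONS) for _ in range(length)]


def verify_challenge(issued, observed):
    """Pass only if the observed actions match the issued ones, in order.

    `observed` is assumed to come from a separate component that watches
    the user through the camera; that component is outside this sketch.
    """
    return list(observed) == list(issued)
```

Because a fresh sequence is drawn for every session, a replayed recording of an earlier session would only pass if it happened to contain the same actions in the same order.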

Biometric Liveness is a critical component in any biometric authentication system. Properly designed systems require the use of liveness tests before moving on to biometric matching. After all, if it’s determined the subject is not alive, there’s little reason to perform biometric matching and further authentication procedures. A well-designed system that is easy to use allows only the right people access and denies anybody else.
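The ordering described above, liveness first and matching second, can be sketched in Python as follows. This is an illustration under stated assumptions, not a reference implementation: `Decision`, `authenticate`, and the two checker callables `is_live` and `is_match` are hypothetical names standing in for whatever liveness and matching components a real system provides.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    granted: bool
    reason: str


def authenticate(
    sample: bytes,
    is_live: Callable[[bytes], bool],
    is_match: Callable[[bytes], bool],
) -> Decision:
    """Run the liveness check before any biometric matching."""
    # Liveness first: a sample that fails here never reaches the matcher.
    if not is_live(sample):
        return Decision(False, "liveness check failed")
    # Only a live presentation is compared against the enrolled data.
    if not is_match(sample):
        return Decision(False, "biometric match failed")
    return Decision(True, "live and matched")
```

Access is granted only when both checks pass, and a failed liveness check short-circuits the flow so that no matching work is done on a suspected spoof.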

Care to learn more about Facial Biometrics? Be sure to read our previous releases, “Exploring Facial Biometrics. What is it?” and “Facial Biometrics – Voluntary vs Involuntary”.

About the authors:

Jay Meier is a subject matter expert in biometrics & IAM, and an author, tech executive, and securities analyst. Jay currently serves as Senior Vice President of North American Operations at FaceTec, Inc. and is also President & CEO of Sage Capital Advisors, LLC., providing strategic and capital management advisory services to early-stage companies in biometrics and identity management.

Meyer Mechanic is a recognized expert in KYC and digital identity. He is the Founder and CEO of Vaultie, which uses digital identities to create highly fraud-resistant digital signatures and trace the provenance of legal and financial documents. He sits on DIACC’s Innovation Expert Committee and has been a voice of alignment in advancing the use of digital identity in Canada.

Additional contributions made by members of the DIACC’s Outreach Expert Committee including Joe Palmer, President of iProov Inc.